SkinView iOS App for Identifying Skin Cancer with Computer Vision

A mole is a benign (non-cancerous) skin tumor. Almost everyone has between 30 and 60 moles on their body, and nearly all of them are harmless. Some types of moles, however, are slightly more likely than others to develop into melanoma. If a mole shows the ABCDE characteristics of melanoma (Asymmetry, Border, Color, Diameter, and Evolution), it should be checked by a dermatologist.

Request

GP2U Telehealth, an Australia-based online GP clinic, came up with the idea for the SkinView app. SkinView uses a disposable device that clips onto a smartphone and turns it into a digital dermatoscope. The application allows users to receive a skin cancer diagnosis without having to visit a doctor's office and pay a fee. When GP2U Telehealth turned to Integra Sources, the application was already under development. We were hired to port the app from Python to C++ and improve the quality of the computer vision algorithms.

Solution

We ported the SkinView app to C++, but our collaboration with GP2U didn't stop there. Our client was so impressed with the quality of our work and our development approach that they decided to continue working with us on enhancing the accuracy of melanoma detection and optimizing the performance of the iOS app.

Scope of work

- Mobile app development. We ported the code from Python to C++ and integrated the C++ code into the iOS application.

- Computer vision. We built unique algorithms for melanoma detection based on OpenCV.

How it works

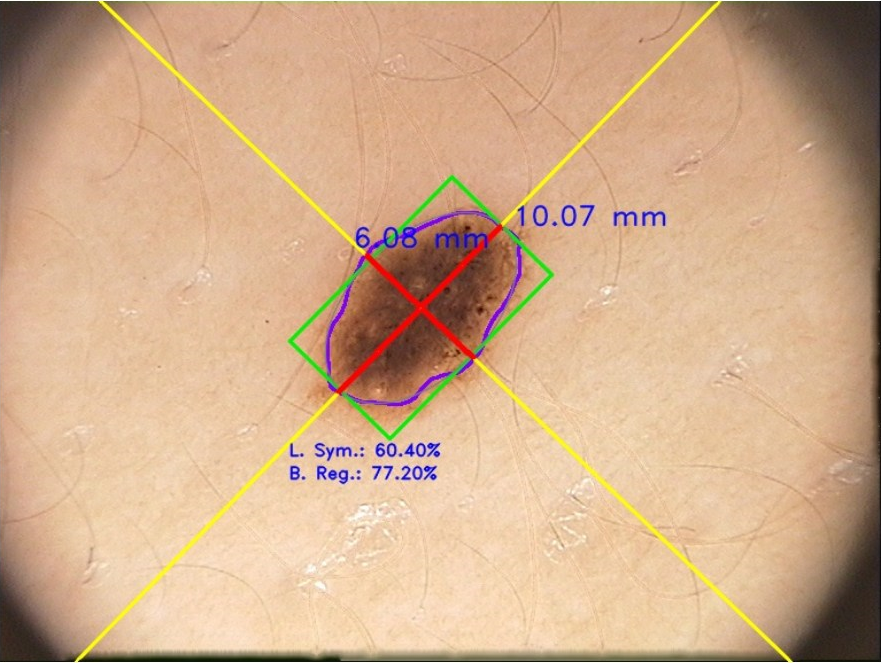

- A user puts a special lens on their smartphone camera and takes a picture of the mole with the SkinView iOS application.

- The application analyzes the mole and tells the user whether it is malignant or not.

- Images showing suspected skin cancer are sent to a specialist for diagnosis.

Computer vision algorithms

To create an intelligent system for detecting skin cancer with a smartphone, we had two options: image processing technology based on OpenCV, or machine learning algorithms that learn from data.

Using machine learning algorithms would've required long processing times, and we needed the app to return results very quickly, within about 10-100 milliseconds of the photo being taken. That's why we went with OpenCV-based image processing for the photos taken by a smartphone. The algorithms we developed provide automated diagnosis of skin cancer at any time and for free. Here is how they work:

Dermatologists consider the following ABCDE characteristics when checking moles for skin cancer:

- A - Asymmetry: the mole is irregular, or not symmetrical in shape

- B - Border: ragged, blurred, or irregular borders or edges of the mole

- C - Color: more than one color, or an uneven distribution of color

- D - Diameter: a large (greater than 6 mm) diameter

- E - Evolution: the mole is changing in size, shape, or color with time.

The main job of our computer vision algorithms was to find the edges of the mole and calculate ABCDE parameters.
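To make the idea concrete, here is a minimal sketch of how three of these parameters (A, B, and D) could be scored once the mole has been separated from the skin. It is not the SkinView implementation: it uses plain Python lists instead of OpenCV, and the function name `abd_metrics`, the binary-mask input, the `mm_per_pixel` calibration factor, and the scoring formulas are all assumptions chosen for illustration. Color needs the original pixels, and Evolution needs photos taken at different times, so both are left out.

```python
import math


def abd_metrics(mask, mm_per_pixel):
    """Rough A, B, and D scores for a binary mole mask (rows of 0/1).

    mm_per_pixel is a hypothetical calibration factor for the clip-on lens.
    """
    pixels = [(x, y) for y, row in enumerate(mask)
              for x, value in enumerate(row) if value]
    area = len(pixels)
    if area == 0:
        raise ValueError("mask contains no mole pixels")
    inside = set(pixels)

    # A: mirror the mole about the vertical and horizontal lines through its
    # centroid and count the pixels that have no mirrored partner.
    cx = sum(x for x, _ in pixels) / area
    cy = sum(y for _, y in pixels) / area
    unmatched_x = sum((round(2 * cx - x), y) not in inside for x, y in pixels)
    unmatched_y = sum((x, round(2 * cy - y)) not in inside for x, y in pixels)
    asymmetry = (unmatched_x + unmatched_y) / (2 * area)

    # B: the perimeter is the number of pixel edges that face the background.
    # perimeter^2 / (4 * pi * area) grows as the outline gets more ragged.
    perimeter = sum((x + dx, y + dy) not in inside
                    for x, y in pixels
                    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)))
    border_irregularity = perimeter ** 2 / (4 * math.pi * area)

    # D: approximate the diameter by the longer side of the bounding box.
    xs = [x for x, _ in pixels]
    ys = [y for _, y in pixels]
    extent_px = max(max(xs) - min(xs), max(ys) - min(ys)) + 1
    diameter_mm = extent_px * mm_per_pixel

    return {
        "asymmetry": asymmetry,
        "border_irregularity": border_irregularity,
        "diameter_mm": diameter_mm,
        "exceeds_6mm": diameter_mm > 6.0,
    }
```

A perfectly symmetric blob scores an asymmetry of 0, and the score rises toward 1 as fewer pixels have a mirrored counterpart. The border score is only meaningful as a relative measure between masks of similar resolution, because counting pixel edges overestimates the length of curved outlines.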

We used histogram analysis, contour detection, color filters, image overlay, and other methods. To implement proper mole border extraction, we built the following algorithms:

- Color balancing that reproduces the technique behind Adobe Photoshop's "Auto Levels"

- Unsharp masking function

- Sharpening filter

- Gamma correction for working with subchannels of the source image

- Automatic brightness and contrast optimization with optional histogram clipping

- White balancing

- Custom k-means clustering with a predefined color set.
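Three of the steps in this list can be sketched in a few lines of plain Python. The code below is an illustration under stated assumptions, not the algorithms shipped in SkinView: it works on flat lists of 8-bit values and RGB tuples rather than OpenCV matrices, the function names and the `clip_percent` default are made up for the example, and "predefined color set" is interpreted here as a fixed palette that seeds the k-means centers.

```python
def auto_levels(channel, clip_percent=1.0):
    """Stretch an 8-bit channel to 0-255, ignoring clip_percent of the
    darkest and of the brightest pixels (histogram clipping)."""
    histogram = [0] * 256
    for value in channel:
        histogram[value] += 1
    budget = len(channel) * clip_percent / 100.0

    # Walk inward from each end until the clipping budget is used up.
    low, seen = 0, histogram[0]
    while low < 255 and seen <= budget:
        low += 1
        seen += histogram[low]
    high, seen = 255, histogram[255]
    while high > 0 and seen <= budget:
        high -= 1
        seen += histogram[high]
    if high <= low:
        return list(channel)  # flat channel: nothing to stretch

    return [min(255, max(0, round((v - low) * 255 / (high - low))))
            for v in channel]


def gamma_correct(channel, gamma):
    """Apply gamma correction through a lookup table; gamma > 1 brightens."""
    lut = [round(255 * (i / 255) ** (1.0 / gamma)) for i in range(256)]
    return [lut[v] for v in channel]


def _nearest(pixel, centers):
    """Index of the center closest to pixel (squared RGB distance)."""
    return min(range(len(centers)),
               key=lambda k: sum((p - c) ** 2
                                 for p, c in zip(pixel, centers[k])))


def kmeans_fixed_palette(pixels, palette, iterations=10):
    """K-means over RGB tuples whose centers start at a predefined palette
    (an assumed reading of 'predefined color set')."""
    centers = [tuple(float(c) for c in color) for color in palette]
    labels = None
    for _ in range(iterations):
        new_labels = [_nearest(p, centers) for p in pixels]
        if new_labels == labels:
            break  # assignments stopped changing
        labels = new_labels
        for k in range(len(centers)):
            members = [p for p, label in zip(pixels, labels) if label == k]
            if members:  # keep the palette color if a cluster is empty
                centers[k] = tuple(sum(m[i] for m in members) / len(members)
                                   for i in range(3))
    return labels, centers
```

Seeding the centers from a palette makes the clustering deterministic and keeps each cluster index tied to a known color, for example a hypothetical palette of skin tone, light brown, and dark brown, which is convenient when the cluster labels feed a color score.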

We also implemented several techniques for removing hairs, vignetting, and flash glare from the image.
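The case study does not describe how these artifacts were removed. As one possible illustration only, a common heuristic for flash glare is to flag pixels that are very bright and almost colorless, so that later steps can ignore or fill them in. The sketch below uses Python's standard `colorsys` module; the function name `glare_mask` and both thresholds are hypothetical values picked for the example.

```python
import colorsys


def glare_mask(pixels, min_value=0.9, max_saturation=0.15):
    """Flag RGB pixels (0-255 tuples) that look like specular flash glare.

    A pixel is flagged when it is very bright (HSV value >= min_value) and
    nearly colorless (HSV saturation <= max_saturation). Both thresholds
    are illustrative assumptions, not tuned values.
    """
    mask = []
    for r, g, b in pixels:
        _, saturation, value = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        mask.append(value >= min_value and saturation <= max_saturation)
    return mask
```

Bright but strongly colored pixels are left alone, so the check does not mistake a saturated red patch for a reflection.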

With our complex but efficient algorithms, we increased the accuracy of skin cancer detection to 80%, up from the 30% accuracy the app had at the start of the project. We also met our target of processing an image in 10-100 milliseconds on iPhones.

Technologies Used

- Python with the scikit-image library was used to build the proof of concept (PoC).

- C++ with the OpenCV library was used to port the algorithms from Python.

- Objective-C was used for iOS mobile application development.

- The algorithms written in C++ were wrapped in Objective-C and integrated into the iOS mobile application.

Result

We significantly improved the algorithms for image processing and recognition. The melanoma diagnostic accuracy of our algorithms increased from 30% to 80%. Despite the complexity of the algorithms, we reduced the processing time to less than 0.1 seconds, whereas the original version of the app needed 4-6 seconds to process an image and show the results.

SkinView became one of the two winners of the Murdoch Children's Research Institute Bytes4Health competition in November 2016. We continued working with the GP2U Telehealth company on another project.

Integra Sources are great to work with and highly skilled. Definitely A graders.
